If you think your company is still "waiting to adopt AI," you're likely mistaken. The reality of 2026 is that your employees have already made the decision for you.

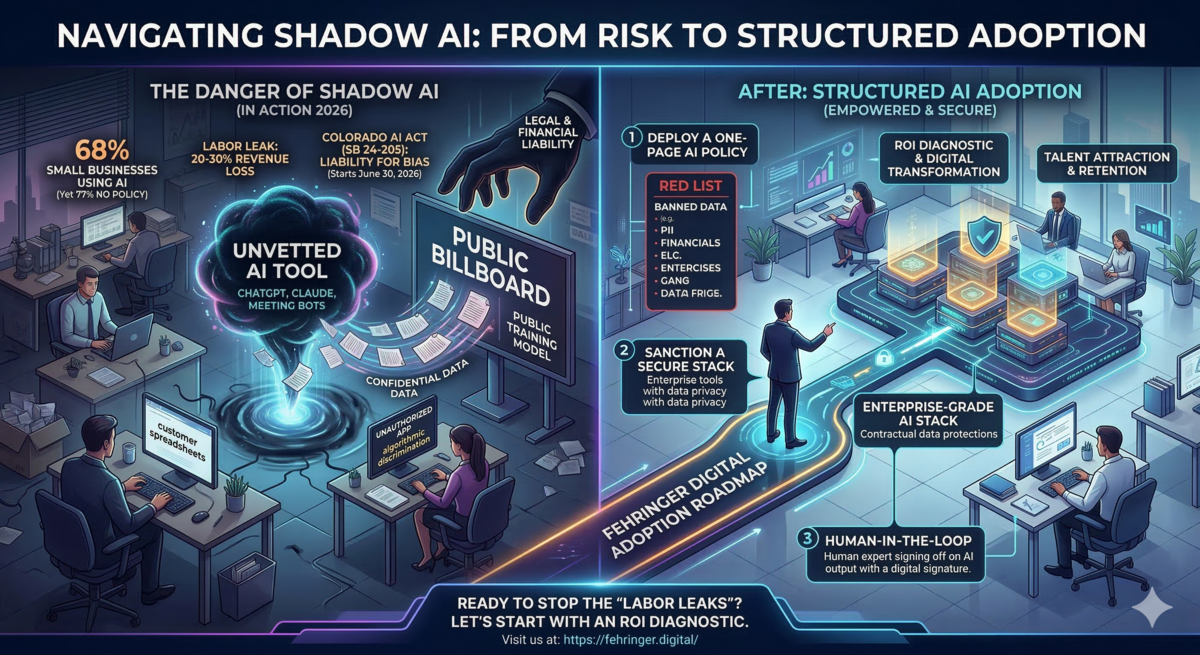

In the industry, we call this Shadow AI: the unauthorized use of artificial intelligence tools by staff members who are simply trying to keep up with their workloads. They aren't trying to be malicious — they are trying to be efficient. They are using unvetted, consumer-grade tools to summarize contracts, draft client emails, analyze spreadsheets, and debug code. And they are doing it right now, on your network, with your data.

"Doing nothing is not a neutral stance. It is an active move in the wrong direction."

The Danger of the "Quiet Corner"

While your team's willingness to innovate is an asset, letting them do so in the shadows is a massive liability. When an employee pastes a proprietary customer spreadsheet or a confidential deal memo into a free, public AI tool, that input may be retained by the provider and, under many consumer terms of service, used to train future models. That can turn your private intellectual property into something much closer to a public billboard.

The consequences range from uncomfortable to catastrophic depending on what was shared:

- Legal exposure — confidential client data shared with third-party AI tools may violate NDAs, data processing agreements, or industry regulations like HIPAA and GDPR.

- Competitive intelligence loss — pricing models, product roadmaps, and unreleased strategy documents can be retained by the provider and, in the worst case, resurface in responses to other users, including competitors.

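- Compliance violations — Colorado SB 24-205 (the Colorado AI Act), now scheduled to take effect in June 2026, imposes transparency and accountability obligations on organizations that use high-risk AI systems to make consequential decisions such as hiring, lending, and housing.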

- Reputational damage — a single data breach traced back to unauthorized AI usage can erode client trust in ways that are slow and costly to rebuild.

Why Employees Default to Shadow AI

Before you blame your team, it is worth understanding why this happens. In my experience advising enterprise organizations on AI transformation, the root cause is almost never recklessness — it is a productivity gap.

When employees face a 48-hour turnaround on a manual task that a consumer AI tool can complete in four minutes, the choice becomes obvious. If your organization has not provided a sanctioned, secure alternative, you have not given your team a real choice. You have simply made the unsanctioned option the only practical one.

This is the defining characteristic of Shadow AI: it flourishes wherever the gap between employee need and organizational capability is widest. The larger that gap, the more data flows into unmanaged channels — and the more liability accumulates without anyone realizing it.

The Governance Framework: From Shadow to Architected

To reclaim your margin and protect your data, you must move from "unauthorized use" to "architected workflows." This is not about restricting your team — it is about channeling their drive to innovate into environments where that innovation is safe, measurable, and aligned with business objectives.

A functional AI governance framework operates across four layers:

Layer 1 — Audit & Visibility

You cannot govern what you cannot see. The first step is a full Shadow AI audit: which tools are being used, by which teams, and what categories of data are being input. This audit typically surfaces 3–5x more unauthorized tool usage than leadership expects — and that gap is exactly where your liability lives.
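For teams that want to start the audit from data they already have, the sketch below shows one way to answer the first two questions (which tools, which teams) from a web proxy export. It is a minimal illustration under stated assumptions: the CSV layout with `team` and `domain` columns is hypothetical, and the domain list is illustrative rather than complete. A real audit would pull a maintained category list from your secure web gateway or CASB.

```python
import csv
import io
from collections import Counter

# Illustrative (not exhaustive) list of consumer AI domains. In practice,
# source this from your gateway's maintained category list.
CONSUMER_AI_DOMAINS = {
    "chatgpt.com",
    "chat.openai.com",
    "gemini.google.com",
    "claude.ai",
}


def audit_proxy_log(log_file, sanctioned=frozenset()):
    """Count requests to unsanctioned AI domains, grouped by (team, domain).

    Assumes a hypothetical CSV export with 'team' and 'domain' columns;
    adapt the column names to whatever your proxy actually produces.
    """
    usage = Counter()
    for row in csv.DictReader(log_file):
        domain = row["domain"].strip().lower()
        # Only count known AI tools the organization has not approved.
        if domain in CONSUMER_AI_DOMAINS and domain not in sanctioned:
            usage[(row["team"], domain)] += 1
    return usage


if __name__ == "__main__":
    # Tiny in-memory sample standing in for a real proxy export.
    sample = io.StringIO(
        "user,team,domain\n"
        "alice,Finance,chatgpt.com\n"
        "bob,Finance,ChatGPT.com\n"
        "carol,Legal,claude.ai\n"
        "dave,Legal,gemini.google.com\n"
    )
    # Hypothetical: suppose Gemini is covered by an enterprise agreement.
    approved = {"gemini.google.com"}
    for (team, domain), hits in audit_proxy_log(sample, approved).most_common():
        print(f"{team:<8} {domain:<18} {hits}")
```

Proxy logs reveal which tools and which teams, but not what data was pasted in. The third audit question, data categories, usually needs DLP tooling or direct conversations with the teams involved.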

Layer 2 — Policy & Rules of Engagement

A formal AI Acceptable Use Policy defines which tools are sanctioned, what data classifications are permitted as AI inputs, and what the consequences of non-compliance are. This policy needs to be specific. Generic "no unauthorized tools" language is routinely ignored. Teams need to know exactly what is permitted, what is prohibited, and why — in plain language.
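One way to keep a policy specific is to express its core rule, which tools may receive which data classifications, as a small lookup table that also produces a plain-language reason. The sketch below assumes a hypothetical four-tier classification scheme and hypothetical tool names; your own tiers and sanctioned tools would replace them.

```python
from enum import IntEnum


class DataClass(IntEnum):
    """Hypothetical four-tier classification, lowest to highest sensitivity."""
    PUBLIC = 0
    INTERNAL = 1
    CONFIDENTIAL = 2
    RESTRICTED = 3


# Hypothetical policy table: sanctioned tool -> most sensitive data class
# that may be used as input to that tool.
POLICY = {
    "enterprise-assistant": DataClass.CONFIDENTIAL,
    "private-rag": DataClass.RESTRICTED,
    "public-chatbot": DataClass.PUBLIC,
}


def check_use(tool: str, data_class: DataClass) -> tuple[bool, str]:
    """Return (allowed, plain-language reason) for a proposed AI input."""
    ceiling = POLICY.get(tool)
    if ceiling is None:
        # Anything not listed is unsanctioned by default.
        return False, f"'{tool}' is not a sanctioned tool."
    if data_class > ceiling:
        return False, (f"{data_class.name} data may not be used with "
                       f"'{tool}'; its limit is {ceiling.name}.")
    return True, f"{data_class.name} data is permitted in '{tool}'."


if __name__ == "__main__":
    print(check_use("public-chatbot", DataClass.CONFIDENTIAL))
    print(check_use("private-rag", DataClass.RESTRICTED))
```

Defaulting unlisted tools to "not sanctioned" mirrors the allowlist approach the policy describes, and returning a reason alongside the decision keeps the "why" visible to the employee rather than just a refusal.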

Layer 3 — Secure Enterprise Environments

Policy without infrastructure is wishful thinking. Organizations need to deploy enterprise-grade AI environments: private RAG (Retrieval-Augmented Generation) systems, isolated LLM instances, or vetted platforms backed by the appropriate contracts, such as Business Associate Agreements for HIPAA-regulated data. When employees have a fast, capable, sanctioned tool available, Shadow AI usage tends to drop sharply because the incentive to go outside the system disappears.

Layer 4 — Ongoing Measurement & Adoption

AI governance is not a one-time project — it is a continuous practice. Usage analytics, quarterly policy reviews, and active change management programs keep the framework current as the AI landscape evolves and your team's capabilities mature. The organizations that treat governance as an ongoing discipline are the ones that stay ahead of both the opportunity and the risk.

"The goal is not to build a wall. It is to build a better road."

What This Looks Like in Practice

At a major web services company, a self-service digital architecture — built on structured workflows and governed content channels — replaced the equivalent workload of 20–30 traditional support agents, delivering over $800,000 in annual cost savings. That outcome was only possible because the underlying data environment was architected correctly from the start. Unmanaged inputs would have produced unreliable outputs, destroying both the ROI and the client trust the system was designed to protect.

The same principle applies at any scale. When the environment is secure and the workflows are intentional, AI becomes a genuine force multiplier. When it is unmanaged, it becomes a liability that compounds silently — until it doesn't.

The Bottom Line: Inaction Has a Price Tag

Shadow AI is not a future risk. It is a present condition in most organizations today. The question is not whether your employees are using unauthorized AI tools. The question is whether you have built the governance infrastructure to channel that usage productively — or whether you are accumulating liability with every workday that passes without a policy in place.

The cost of a governed AI environment is known, bounded, and recoverable. The cost of a Shadow AI data breach — legal, financial, and reputational — is none of those things.

Doing nothing is not a neutral stance. It is an active move in the wrong direction.